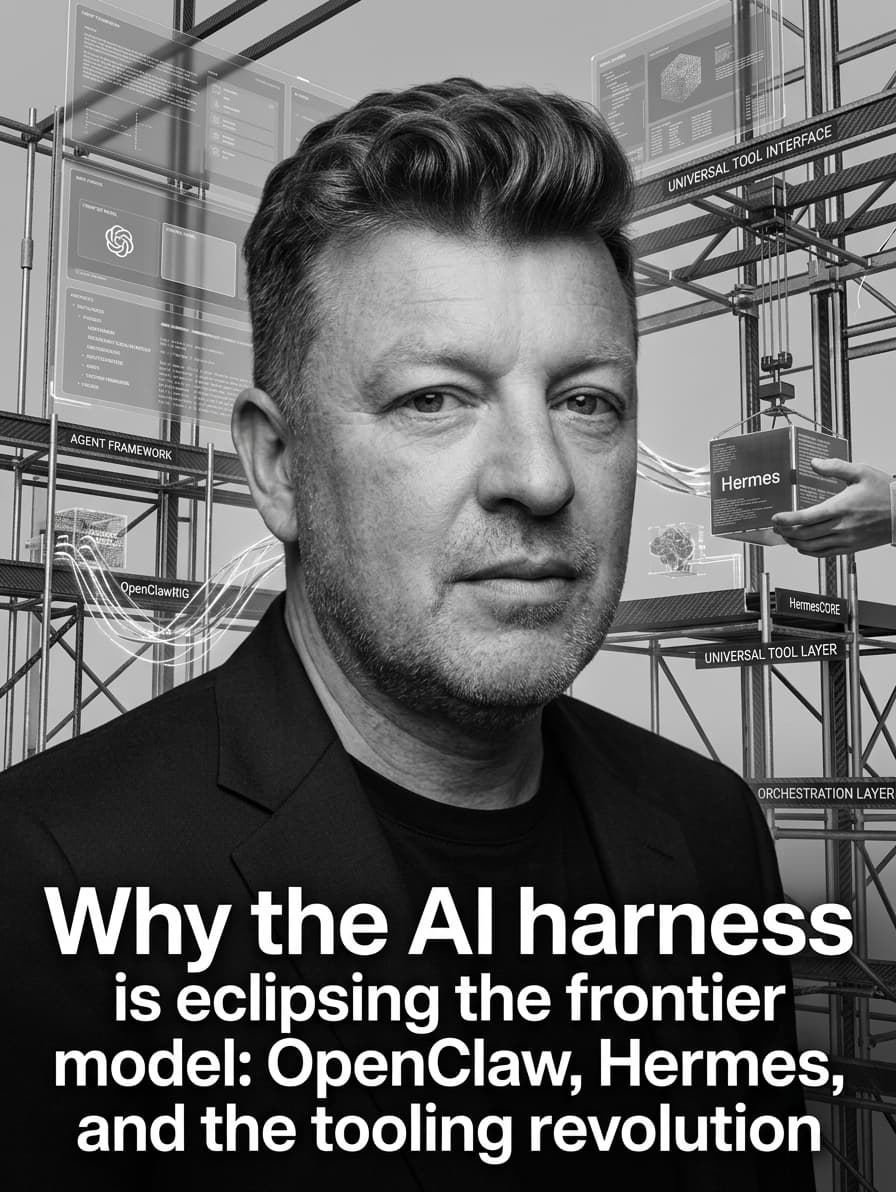

Why the AI harness is eclipsing the frontier model: OpenClaw, Hermes, and the tooling revolution

Frontier models get the headlines, but the real breakthrough of early 2026 is the orchestration layer. Agent frameworks and universal tool interfaces are fundamentally changing how AI operates software.

If you look at the last six months of AI development, the narrative has subtly shifted. We are still tracking the parameter counts and context windows of frontier models, but the actual capability leaps are happening at the orchestration layer.

The industry has realized that even a highly capable language model is fundamentally limited when it is stranded in a chat interface. To do real work, a model needs a harness: a persistent execution environment that handles memory, tool routing, and application interfaces. In early 2026, the frameworks wrapping the models became as important as, if not more important than, the models themselves.

The proprietary workspace: Claude, Perplexity, and the desktop

The push toward agent-native workspaces started with the major labs moving beyond the browser tab. Anthropic's rollout of Claude computer use and Claude Code in late March 2026 marked a transition from a conversational assistant to a system that drives your operating system. It executes terminal commands, reads your local file system, and manipulates applications directly, functioning like an autonomous digital coworker.

Similarly, Perplexity Computer evolved from a standard answer engine into an autonomous research layer. The platform relies heavily on Nvidia's Nemotron 3 Super models to run complex, multi-step web and data operations securely on the desktop.

These proprietary ecosystems proved the concept: an AI that controls the computer is far more useful than an AI that only generates text. But building custom enterprise logic on top of locked-down, vendor-specific workspaces remains difficult.

OpenClaw and NemoClaw: The open-source AI OS

The desire for a vendor-agnostic execution environment led directly to the rise of OpenClaw. Launched in late 2025, it surged in popularity in early 2026 and rapidly became the standard open-source "operating system" for agentic AI. It provides a local, scriptable runtime that connects any LLM (Claude, GPT, or local weights) to your local tools and file systems.

The architecture is model-agnostic and relies on a robust permissions and policy engine, allowing developers to build specialized agents without being tethered to a single provider's UX.
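To make the idea of a permissions and policy layer concrete, here is a minimal sketch of a deny-by-default gate that sits between the model and its tools. It is not OpenClaw's actual API: the `Policy` class, the `run_tool` function, the tool names, and the `/workspace` paths are all hypothetical and exist only for illustration.

```python
import posixpath
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass
class Policy:
    """Hypothetical allow-list: tool name -> path patterns that tool may touch."""
    allowed: dict[str, list[str]] = field(default_factory=dict)

    def permits(self, tool: str, path: str) -> bool:
        # Collapse ".." segments first so a path cannot escape via traversal.
        normalized = posixpath.normpath(path)
        # Deny by default: unknown tools have no patterns, so any() is False.
        return any(fnmatchcase(normalized, p) for p in self.allowed.get(tool, []))


def run_tool(policy: Policy, tool: str, path: str) -> str:
    """Gate a tool call behind the policy, whichever model requested it."""
    if not policy.permits(tool, path):
        return f"denied: {tool} {path}"
    # A real runtime would dispatch to the tool here; the sketch only reports.
    return f"allowed: {tool} {path}"


policy = Policy(allowed={
    "read_file": ["/workspace/*"],
    "write_file": ["/workspace/out/*"],
})

print(run_tool(policy, "read_file", "/workspace/notes.txt"))
print(run_tool(policy, "write_file", "/workspace/notes.txt"))
print(run_tool(policy, "read_file", "/workspace/../etc/passwd"))
```

Because the check lives in the runtime rather than in the prompt, the same rules apply no matter which model is plugged in, which is the point of a model-agnostic design.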

The importance of this architecture was validated in March 2026 when Nvidia announced NemoClaw at GTC. NemoClaw wraps OpenClaw in enterprise-grade security policies and bundles it with Nvidia's Nemotron models. By offering a turnkey, secure OpenClaw deployment for GPU datacenters, Nvidia effectively standardized the open agent stack for the enterprise.

Hermes: Memory and self-improving skills

While OpenClaw provided the operating system, Nous Research's Hermes Agent—released to wide acclaim in March 2026—introduced a breakthrough in how local agents handle state and procedural knowledge.

Running locally on a standard VPS or desktop, Hermes operates through a standard ReAct (Reasoning and Acting) loop, but its defining feature is its memory architecture:

- Persistent Memory: It uses external memory providers, such as Mem0, to autonomously extract and retain facts, preferences, and environmental context across completely separate sessions.
- Skill Generation: When Hermes successfully completes a complex, multi-step task, it doesn't just forget the process. It writes a reusable Python script (a "skill") and saves it to its local library.

Instead of relying on a human to write rigid bash scripts, Hermes uses local models to generate, test, and save Python-based procedures. The longer you run the agent, the faster it executes repetitive tasks, effectively self-improving its own toolset over time.
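The loop-plus-skills pattern can be sketched in a few lines. This is not Hermes code: `scripted_model` is a hard-coded stand-in for the LLM, `TOOLS` is a toy registry, and the skill library is a plain in-memory dict that stores the sequence of tool names rather than a generated Python script. The sketch also assumes each step consumes the previous step's output, and it does not model an external memory provider such as Mem0.

```python
from typing import Callable

# Hypothetical tool registry; a real agent would expose shell, file, and web tools.
TOOLS: dict[str, Callable[[str], str]] = {
    "upper": lambda text: text.upper(),
    "reverse": lambda text: text[::-1],
}


def scripted_model(payload: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for the LLM: picks (action, argument) from what it has seen so far."""
    if not observations:
        return "upper", payload
    if len(observations) == 1:
        return "reverse", observations[-1]
    return "finish", observations[-1]


def run_task(kind: str, payload: str, model, skills: dict[str, list[str]],
             max_steps: int = 5) -> tuple[str, int]:
    """Return (result, number_of_model_calls)."""
    if kind in skills:
        # A saved skill exists: replay it without any reasoning steps.
        result = payload
        for tool in skills[kind]:
            result = TOOLS[tool](result)
        return result, 0

    observations: list[str] = []
    trace: list[str] = []
    calls = 0
    for _ in range(max_steps):
        action, argument = model(payload, observations)  # reason
        calls += 1
        if action == "finish":
            skills[kind] = trace  # success: store the procedure as a skill
            return argument, calls
        observations.append(TOOLS[action](argument))  # act, then observe
        trace.append(action)
    raise RuntimeError("step budget exhausted before the task finished")


skills: dict[str, list[str]] = {}
first = run_task("shout_backwards", "abc", scripted_model, skills)
second = run_task("shout_backwards", "xyz", scripted_model, skills)
print(first, second)
```

The first run needs three model calls; the second reuses the saved procedure and needs none, which is the mechanism behind the claim that repetitive tasks get faster over time.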

CLI-Anything: Making all software agent-native

The final piece of the puzzle is the interface between the agent and legacy software. Historically, agents had to rely on brittle GUI automation or manually written API wrappers to control applications like Blender, GIMP, or LibreOffice.

In March 2026, an open-source framework called CLI-Anything solved this translation problem. Operating as a Claude Code plugin or standalone tool, CLI-Anything analyzes an application's source code using a 7-step automated pipeline and generates a complete, production-ready Python command-line interface for it.

Instead of teaching an AI to click buttons in a complex GUI, CLI-Anything provides the agent with structured, predictable terminal commands that return JSON. This transforms human-designed legacy software into an agent-native tool. For builders, this means you can instantly connect OpenClaw or Hermes to complex desktop applications without writing a single line of integration code.
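From the agent's side, consuming a JSON-returning CLI can look roughly like the sketch below. It does not use a real CLI-Anything output: `FAKE_CLI` is a one-line Python program standing in for a generated command-line tool, and the `export --format png` subcommand and the JSON fields are invented for illustration.

```python
import json
import subprocess
import sys

# Stand-in for a generated CLI: echoes its subcommand and arguments as JSON.
FAKE_CLI = (
    "import json, sys; "
    "print(json.dumps({'ok': True, 'command': sys.argv[1], 'args': sys.argv[2:]}))"
)


def call_cli(argv: list[str]) -> dict:
    """Run a command and parse its stdout as JSON.

    check=True raises CalledProcessError on a non-zero exit code, so the
    agent sees a clear failure instead of parsing partial output.
    """
    completed = subprocess.run(argv, capture_output=True, text=True, check=True)
    return json.loads(completed.stdout)


result = call_cli([sys.executable, "-c", FAKE_CLI, "export", "--format", "png"])
print(result["command"], result["args"])
```

The agent gets a dictionary with predictable keys instead of pixels on a screen, so a failed step surfaces as an exit code or a missing field rather than a misclick.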

The engine vs. the transmission

Frontier models like Claude Opus 4.6 or the leaked Mythos model are the engine block of the AI transition. They provide the raw reasoning horsepower.

But the engine can't turn the wheels without a transmission. The real paradigm shift of 2026 is that developers now have a mature, open-source transmission stack: OpenClaw for the runtime, Hermes for persistent memory and skill generation, and CLI-Anything for universal software control. Harnessing the model is no longer a bottleneck; it is the primary vector for building autonomous software.